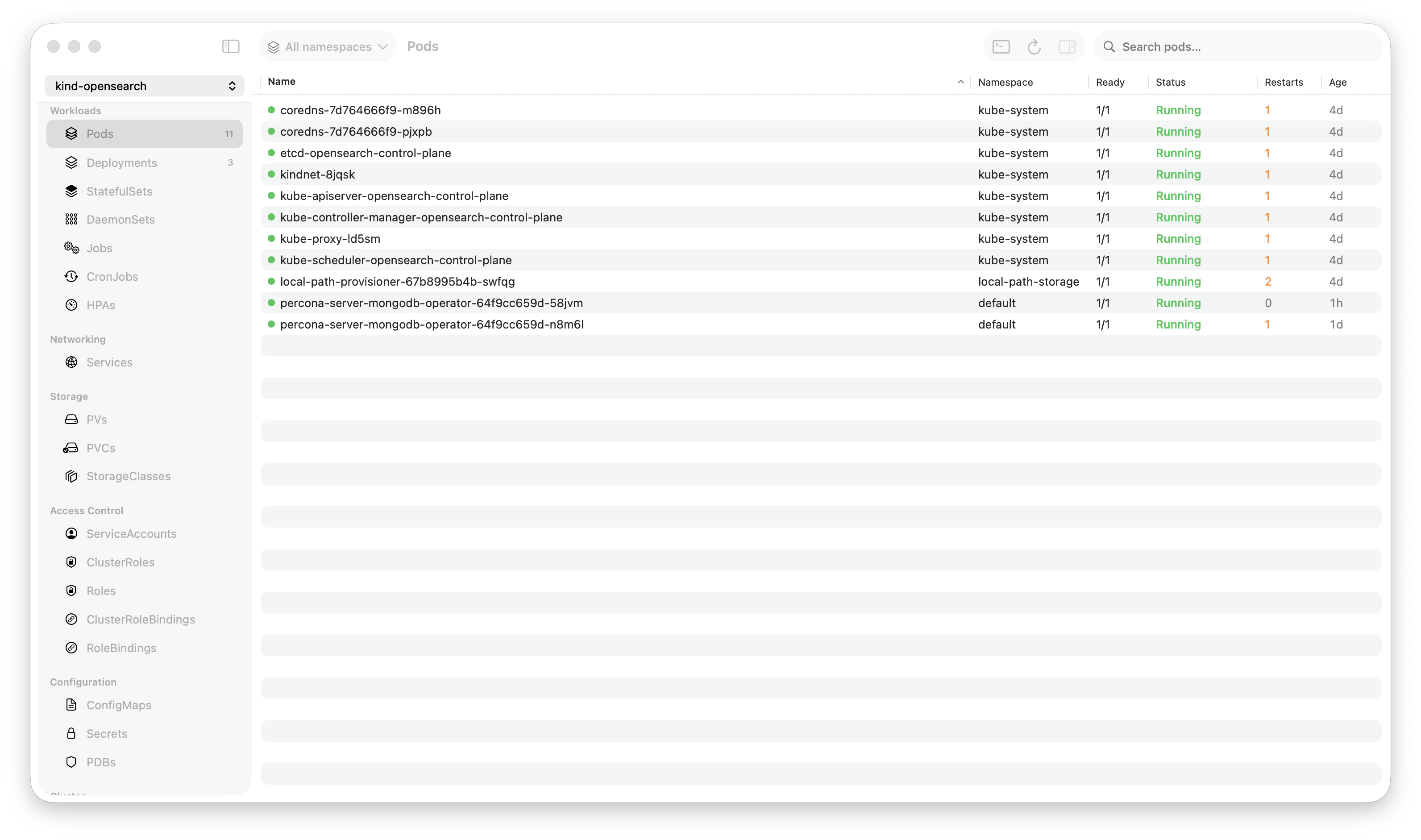


I needed a log viewer for a Kubernetes GUI that could hold 200,000 lines in memory, search the full buffer in under 15ms, stream from multiple pods concurrently, and parse structured JSON/logfmt logs on the fly — all without blocking the main thread.

Here’s how I built it in Rust, and the design decisions that made it fast.

The Constraints

This log viewer runs inside Krust, a native Kubernetes desktop app for macOS. The Rust core handles all Kubernetes API communication and data processing, and a Swift/AppKit UI renders the results. They talk through UniFFI-generated bindings.

The constraints that shaped every decision:

- Never block the main thread. The UI must stay responsive. Log parsing, searching, and streaming happen on Tokio worker threads. The main thread only receives pre-processed results via callbacks.

- Bounded memory. Kubernetes pods can emit thousands of lines per second. Unbounded storage means unbounded memory. The buffer must have a hard ceiling.

- Fast search without indexing. Users search during incidents: “find me the last error.” It needs to be instant. But building and maintaining an inverted index for a streaming, evicting buffer adds complexity. Can we get away with brute force?

- Cross-language FFI. Results cross from Rust into Swift via callback traits. The boundary must be Send + Sync, and we can’t send anything that requires Rust-side cleanup (no borrowed references, no complex lifetimes).

Layer 1: Async Log Streaming

Kubernetes exposes pod logs via an HTTP streaming endpoint. The kube-rs crate wraps this as an AsyncRead stream. Each line arrives as it’s emitted by the container.

```rust
let stream = api.log_stream(&pod_name, &params).await?;
let mut lines = BufReader::new(stream).lines();
```

The streaming loop uses tokio::select! with a cancellation channel:

```rust
let (cancel_tx, mut cancel_rx) = tokio::sync::watch::channel(false);

rt().spawn(async move {
    loop {
        tokio::select! {
            biased;
            _ = cancel_rx.changed() => break,
            item = lines.next() => {
                match item {
                    Some(Ok(line)) => { /* parse and store */ },
                    Some(Err(_)) => break,
                    None => break,
                }
            }
        }
    }
});
```

Why biased Matters

Without biased, tokio::select! randomly picks which branch to poll first. This means a cancelled stream might process several more lines before noticing the cancellation signal. With biased, the cancellation branch always has priority. When the user closes the log panel, the stream stops immediately — no stale lines processed, no wasted work.

Why watch Over oneshot

A oneshot channel can only fire once and is consumed on receive. A watch channel holds its latest value, so the receiver can be awaited repeatedly inside a loop without giving it up, and a signal sent while the loop is busy with a line is not lost. Since the streaming loop runs indefinitely, that is the behavior we need: the sender sets the value to true, the next changed() call resolves, and the loop breaks.

The caller gets back a LogHandle with the sender:

```rust
pub struct LogHandle {
    cancel_tx: tokio::sync::watch::Sender<bool>,
}

impl LogHandle {
    pub fn cancel(&self) {
        let _ = self.cancel_tx.send(true);
    }
}
```

Drop semantics handle cleanup: when LogHandle is dropped, the sender is dropped, which causes cancel_rx.changed() to return an error, breaking the loop. Belt and suspenders.

Layer 2: Structured Log Parsing

Modern services emit structured logs. A raw line like:

```json
{"level":"error","msg":"connection refused","service":"payments","latency_ms":2340}
```

…is hard to read. The viewer needs to parse it, extract key-value pairs, detect the log level, and present it in a compact format.

The Three-Level Strategy

Every incoming log line runs through a tiered parser:

Tier 1: JSON — If the line (after any timestamp prefix) starts with {, try serde_json::from_str(). Walk the JSON object, extract key-value pairs, flatten one level of nesting (res.statusCode), skip noise fields (timestamp, pid, hostname, version — 11 fields total), and drop values over 500 bytes (stack traces).

Tier 2: Logfmt — If JSON fails, try logfmt (key=value key2="quoted value"). This is a hand-written byte-level parser, not regex. It walks the bytes with index arithmetic, handles quoted values with escapes, and requires at least 2 key-value pairs to qualify (to avoid false positives on random text).

Tier 3: ASCII fallback — If neither format matches, scan the first 120 bytes for log level keywords (error, fatal, warn, debug, etc.) using case-insensitive byte comparison.
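To make Tier 3 concrete, here is a minimal sketch of a keyword scan over the first 120 bytes. It is not Krust's actual code: the function name detect_level, the keyword table, and the mapping of fatal to the same code as error are assumptions for illustration. The numeric codes follow the 0=error..4=trace, 255=unknown convention that the stored line metadata uses.

```rust
/// Assumed level codes: 0=error, 1=warn, 2=info, 3=debug, 4=trace.
const LEVEL_UNKNOWN: u8 = 255;

/// Hypothetical keyword table; the real list of keywords is not shown in the article.
const KEYWORDS: &[(&str, u8)] = &[
    ("fatal", 0),
    ("error", 0),
    ("warn", 1),
    ("info", 2),
    ("debug", 3),
    ("trace", 4),
];

/// Scan at most the first 120 bytes for a level keyword, ignoring ASCII case.
/// The keyword that starts earliest in the line wins.
pub fn detect_level(line: &str) -> u8 {
    let bytes = line.as_bytes();
    let window = &bytes[..bytes.len().min(120)];
    for start in 0..window.len() {
        for (kw, code) in KEYWORDS {
            let end = start + kw.len();
            if end <= window.len() && window[start..end].eq_ignore_ascii_case(kw.as_bytes()) {
                return *code;
            }
        }
    }
    LEVEL_UNKNOWN
}
```

A plain substring scan like this will also match words such as "information", which is one reason it makes sense as the last tier rather than the first.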

Why Hand-Written Logfmt?

Regex would work but adds overhead on the hot path. Every incoming log line — potentially hundreds per second — hits this parser. The hand-written version:

- Zero allocations until a match is confirmed (just index arithmetic)

- No regex compilation overhead

- Handles edge cases (escaped quotes, standalone level tokens) that a simple regex wouldn’t

- Runs in ~200ns per line on M-series chips
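For readers who have not written one, a byte-walking logfmt parser can be sketched as below. This is an illustration under assumptions, not the parser described above: the name parse_logfmt and the return type are hypothetical, escaped characters are kept literally instead of being interpreted, and unlike the real version it allocates as it goes instead of deferring allocation until a match is confirmed.

```rust
/// Parse `key=value key2="quoted value"` pairs by walking bytes with an index.
/// Returns None unless at least two pairs are found.
pub fn parse_logfmt(line: &str) -> Option<Vec<(String, String)>> {
    let b = line.as_bytes();
    let mut pairs = Vec::new();
    let mut i = 0;
    while i < b.len() {
        // Skip separating spaces.
        while i < b.len() && b[i] == b' ' { i += 1; }
        // Read the key up to '=' or a space.
        let key_start = i;
        while i < b.len() && b[i] != b'=' && b[i] != b' ' { i += 1; }
        if i >= b.len() { break; }                 // trailing bare token
        if b[i] != b'=' { continue; }              // bare token without '='
        if i == key_start { i += 1; continue; }    // stray '=', skip it
        let key = &line[key_start..i];
        i += 1; // consume '='

        let mut value = String::new();
        if i < b.len() && b[i] == b'"' {
            i += 1; // opening quote
            let mut seg = i;
            while i < b.len() && b[i] != b'"' {
                if b[i] == b'\\' && i + 1 < b.len() {
                    value.push_str(&line[seg..i]); // text before the backslash
                    seg = i + 1;                   // keep the escaped byte literally
                    i += 2;
                } else {
                    i += 1;
                }
            }
            value.push_str(&line[seg..i]);
            if i < b.len() { i += 1; } // closing quote
        } else {
            let start = i;
            while i < b.len() && b[i] != b' ' { i += 1; }
            value.push_str(&line[start..i]);
        }
        pairs.push((key.to_string(), value));
    }
    if pairs.len() >= 2 { Some(pairs) } else { None }
}
```

All slice boundaries sit next to ASCII bytes (space, =, quote, backslash), so slicing the &str by byte index stays on character boundaries even when values contain non-ASCII text.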

The Compact Format Trick

Here’s the key memory insight. Parsing produces a compact representation:

```
level\x1Fmsg\x1Econnection refused\x1Fservice\x1Epayments\x1Flatency_ms\x1E2340
```

(\x1F = unit separator between key-value pairs, \x1E = record separator between key and value)
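A small sketch of how such a string could be built and split apart again, following the separator roles given above. The function names are hypothetical, and it assumes every field is a plain key-value pair; how Krust lays out the leading level field is not spelled out here, so treat this as an approximation of the idea.

```rust
const PAIR_SEP: char = '\x1F'; // unit separator: between key-value pairs
const KV_SEP: char = '\x1E';   // record separator: between a key and its value

/// Join key-value pairs into one compact string.
pub fn encode_compact(pairs: &[(&str, &str)]) -> String {
    let mut out = String::new();
    for (n, (k, v)) in pairs.iter().enumerate() {
        if n > 0 {
            out.push(PAIR_SEP);
        }
        out.push_str(k);
        out.push(KV_SEP);
        out.push_str(v);
    }
    out
}

/// Split a compact string back into borrowed key-value pairs.
/// Fields without a key/value separator are skipped.
pub fn decode_compact(s: &str) -> Vec<(&str, &str)> {
    s.split(PAIR_SEP)
        .filter_map(|pair| pair.split_once(KV_SEP))
        .collect()
}
```

Control characters work well as separators because they essentially never appear in log keys or values, so no escaping is needed on either side of the FFI boundary.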

This compact string is sent to Swift immediately via the callback for display. But it’s not stored in the ring buffer. Only the raw line and a few bytes of metadata are stored:

```rust
struct StoredLine {
    raw: String, // Original line, for search
    level: u8,   // 0=error..4=trace, 255=unknown
    ts_end: u16, // Byte offset where timestamp ends
}
```

Why discard the compact format? Because most lines are displayed once and never looked at again. Only search results need the compact format, and there are far fewer of those. Re-parsing a search result takes ~200ns per line. Storing compact for all 100K lines would cost ~40% more memory for data that’s used <1% of the time.

This asymmetric caching reflects a key insight: optimize for the common case (streaming), not the rare case (searching). Stream display is the hot path. Search is the warm path. Storage is the constraint.

Layer 3: The Ring Buffer

The core data structure is a VecDeque<StoredLine> with a hard cap:

```rust
struct Inner {
    lines: VecDeque<StoredLine>,
    base_number: i64,
    max_lines: usize, // 100,000
}

pub struct LogStore {
    inner: RwLock<Inner>,
}
```

Why VecDeque?

I considered three options:

- Fixed-size array with manual wrapping: classic ring buffer. O(1) everything. But it requires unsafe for uninitialized memory, manual index math, and can’t grow. Complexity for no real benefit when VecDeque exists.

- Vec with rotation: push to back, shift front when full. Vec::remove(0) is O(n), a non-starter for 100K elements.

- VecDeque: amortized O(1) push_back and pop_front. Contiguous-ish memory layout (two slices). No unsafe code. Standard library, well-tested.

VecDeque wins on simplicity. The performance difference between VecDeque and a hand-rolled ring buffer is negligible for this use case — the bottleneck is parsing and I/O, not buffer operations.

Line Number Tracking

When the buffer evicts old lines, search results still need to report accurate line numbers (position in the original stream, not the buffer):

```rust
fn add(&self, line: StoredLine) {
    let mut g = self.inner.write().unwrap_or_else(|e| e.into_inner());
    if g.lines.len() >= g.max_lines {
        g.lines.pop_front();
        g.base_number += 1;
    }
    g.lines.push_back(line);
}
```

If 1,000,000 lines have been received and 100,000 are stored, base_number is 900,000. A match at buffer index 42 reports line number 900,042. The user sees monotonically increasing line numbers regardless of eviction.
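The eviction arithmetic is easy to check in isolation. The sketch below is a stripped-down, lock-free stand-in for the store (the type BoundedLines and its methods are hypothetical, and it stores plain strings instead of StoredLine) that shows base_number advancing as old lines are evicted.

```rust
use std::collections::VecDeque;

/// Bounded line buffer that remembers how many lines were evicted.
/// Assumes max_lines >= 1.
pub struct BoundedLines {
    lines: VecDeque<String>,
    base_number: i64, // stream line number of lines[0]
    max_lines: usize,
}

impl BoundedLines {
    pub fn new(max_lines: usize) -> Self {
        Self { lines: VecDeque::with_capacity(max_lines), base_number: 0, max_lines }
    }

    /// Append a line, evicting the oldest one when the buffer is full.
    pub fn add(&mut self, line: String) {
        if self.lines.len() >= self.max_lines {
            self.lines.pop_front();
            self.base_number += 1;
        }
        self.lines.push_back(line);
    }

    /// Stream line number for a buffer index.
    pub fn line_number(&self, index: usize) -> i64 {
        self.base_number + index as i64
    }

    /// Look a line up by its stream line number; None if it was evicted
    /// or has not arrived yet.
    pub fn get_by_line_number(&self, n: i64) -> Option<&str> {
        if n < self.base_number {
            return None;
        }
        self.lines.get((n - self.base_number) as usize).map(String::as_str)
    }
}
```

With a capacity of 4 and ten lines pushed, six lines have been evicted, so buffer index 0 maps to stream line 6 and anything below that is gone.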

Lock Poisoning Recovery

Production systems can’t afford to crash because a previous operation panicked while holding a lock:

```rust
let g = self.inner.read().unwrap_or_else(|e| {
    eprintln!("krust: LogStore read lock poisoned, recovering");
    e.into_inner()
});
```

If a panic occurs while holding the write lock, Rust’s RwLock becomes poisoned. Most code uses .unwrap() or .expect(), which propagates the panic. Krust recovers the poisoned guard and continues operating. The data might be in an inconsistent state, but a degraded log viewer is better than a crashed application.

This is tested:

```rust
#[test]
fn log_store_survives_poisoned_lock() {
    use std::panic::AssertUnwindSafe;

    let store = LogStore::new();
    // Panic while holding the write lock so the RwLock becomes poisoned.
    let _ = std::panic::catch_unwind(AssertUnwindSafe(|| {
        let _g = store.inner.write().unwrap();
        panic!("intentional poison");
    }));
    assert_eq!(store.total_count(), 0); // Still works
}
```

Search: Brute Force That’s Fast Enough

The search implementation is deliberately simple: linear scan, backward, with a first-byte prefilter.

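```rust
pub fn search(&self, query: String, limit: i64) -> Vec<LogRow> {
    let q_lower = query.to_ascii_lowercase();
    let q_bytes = q_lower.as_bytes();
    let limit = limit.max(0) as usize;

    let g = self.inner.read().unwrap_or_else(|e| e.into_inner());
    let total = g.lines.len();
    let mut results = Vec::with_capacity(limit.min(total));

    // Scan newest-to-oldest so the most recent matches are found first.
    for i in (0..total).rev() {
        if results.len() >= limit { break; }
        let line = &g.lines[i];
        if contains_ignore_ascii_case(line.raw.as_bytes(), q_bytes) {
            // Re-parse on demand; the compact form is not stored.
            let reparsed = parse_line(line.raw.clone());
            // Remaining LogRow fields (filled from `reparsed`) are elided here.
            results.push(LogRow { line_number: g.base_number + i as i64, .. });
        }
    }
    results.reverse(); // back to chronological order
    results
}
```

Why Backward?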

During incidents, you want the most recent matches. “Show me the last 50 errors” should find them immediately, not scan 100K lines to find all errors and then return the last 50. Backward scanning finds recent matches first and stops at limit.

The final reverse() is O(limit), not O(n). Cheap.
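The same newest-first, stop-at-limit idea can be expressed with iterator adapters. This is a minimal standalone sketch rather than the search function itself: last_matches is a hypothetical helper, it works on plain strings, and it uses a case-sensitive contains to stay short.

```rust
use std::collections::VecDeque;

/// Return buffer indices of the most recent `limit` lines containing `needle`,
/// in chronological (oldest-first) order.
pub fn last_matches(lines: &VecDeque<String>, needle: &str, limit: usize) -> Vec<usize> {
    let mut hits: Vec<usize> = lines
        .iter()
        .enumerate()
        .rev() // newest first
        .filter(|(_, l)| l.contains(needle))
        .map(|(i, _)| i)
        .take(limit) // lazy: scanning stops once `limit` hits are found
        .collect();
    hits.reverse(); // O(limit), not O(n)
    hits
}
```

Because the adapters are lazy, take(limit) ends the scan as soon as enough matches are found, so a query with recent hits touches only the tail of the buffer.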

The Prefilter

The contains_ignore_ascii_case function uses bitwise ASCII lowercase conversion:

```rust
/// Case-insensitive substring test. `needle` must already be ASCII-lowercased.
fn contains_ignore_ascii_case(haystack: &[u8], needle: &[u8]) -> bool {
    // Guard the edge cases so needle[0] and the length subtraction cannot panic.
    if needle.is_empty() { return true; }
    if needle.len() > haystack.len() { return false; }

    let first = needle[0];
    for i in 0..=(haystack.len() - needle.len()) {
        // Bitwise lowercase: set bit 5 if uppercase ASCII
        let b = haystack[i];
        let bl = b | (((b >= b'A') && (b <= b'Z')) as u8 * 0x20);
        if bl == first {
            // First byte matches: verify the rest
            let mut ok = true;
            for j in 1..needle.len() {
                let hb = haystack[i + j];
                let hbl = hb | (((hb >= b'A') && (hb <= b'Z')) as u8 * 0x20);
                if hbl != needle[j] { ok = false; break; }
            }
            if ok { return true; }
        }
    }
    false
}
```

The trick: for bytes in A..Z, b | 0x20 yields the lowercase letter, and the range check is folded in arithmetically, so there is no lookup table and no explicit branch. The first-byte check skips ~95% of positions without entering the inner loop.
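One detail worth spelling out: the OR with 0x20 is only safe when it is applied to uppercase letters. Pulled out into a helper (ascii_lower is a hypothetical name, not a function from the Krust code), the conversion looks like this and can be checked against the standard library for every byte value.

```rust
/// ASCII lowercase without a lookup table: set bit 5 (0x20) only for b'A'..=b'Z'.
/// Every other byte is returned unchanged.
#[inline]
pub fn ascii_lower(b: u8) -> u8 {
    let is_upper = (b >= b'A') & (b <= b'Z');
    b | ((is_upper as u8) * 0x20)
}
```

An unconditional b | 0x20 would corrupt non-letters, for example turning '@' (0x40) into '`' (0x60), which is why the range test is multiplied in rather than dropped.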

Why Not an Index?

100K lines × ~200 bytes average = ~20MB of text. Memory bandwidth on Apple Silicon is ~200GB/s. Scanning 20MB takes ~0.1ms in theory. In practice, with the overhead of function calls, cache misses, and the prefilter logic, it’s 5-15ms. Fast enough.

An inverted index would bring search under 1ms, but adds:

- Tokenization logic (what counts as a token?)

- Index update on every insertion (hot path overhead)

- Index eviction when buffer evicts (complexity)

- Memory overhead for the index itself

For a log viewer where 15ms search is indistinguishable from instant, the index isn’t worth the complexity. If the buffer grows to 1M lines someday, I’ll reconsider.

FFI: Crossing the Rust-Swift Boundary

The callback pattern uses UniFFI traits:

```rust
#[uniffi::export(with_foreign)]
pub trait LogCallback: Send + Sync {
    fn on_line(&self, raw: String, compact: Option<String>, level: u8, ts_end: u16);
    fn on_error(&self, message: String);
}
```

Swift implements this trait. Rust calls callback.on_line() from a Tokio worker thread. UniFFI handles the cross-language dispatch.

Key constraints:

- Send + Sync required: the callback is moved into a Tokio task (spawn requires Send)

- No borrowed references: everything crossing the FFI boundary is owned (String, not &str)

- Arc wrapping: the callback is Arc<dyn LogCallback> for shared ownership across the async boundary

On the Swift side, on_line dispatches to the main thread for UI updates. The parsing work (which already happened on the Tokio thread) is done — Swift just appends the pre-formatted string.
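To see why the Send + Sync bound and the Arc wrapper are needed, here is a std-only sketch of the same shape. It makes several substitutions that should be read as assumptions: a plain std::thread stands in for the Tokio task, the UniFFI attribute is omitted, and a Rust struct named Collector plays the role of the Swift implementation.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Same method signatures as the callback trait, minus the UniFFI attribute.
pub trait LogCallback: Send + Sync {
    fn on_line(&self, raw: String, compact: Option<String>, level: u8, ts_end: u16);
    fn on_error(&self, message: String);
}

/// Hypothetical stand-in for the Swift side: it just collects raw lines.
#[derive(Default)]
pub struct Collector {
    pub lines: Mutex<Vec<String>>,
}

impl LogCallback for Collector {
    fn on_line(&self, raw: String, _compact: Option<String>, _level: u8, _ts_end: u16) {
        self.lines.lock().unwrap().push(raw);
    }
    fn on_error(&self, _message: String) {}
}

/// Deliver owned lines to the callback from a worker thread.
/// The closure only compiles because `dyn LogCallback` is Send + Sync.
pub fn stream_to(callback: Arc<dyn LogCallback>, input: Vec<String>) -> thread::JoinHandle<()> {
    thread::spawn(move || {
        for raw in input {
            // Only owned values cross the boundary; 255 means "unknown level".
            callback.on_line(raw, None, 255, 0);
        }
    })
}
```

Removing the Send + Sync supertraits from the trait makes the thread::spawn call fail to compile, which is the same check Tokio's spawn performs.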

Shared Runtime, Shared Connections

All log streams share a single Tokio runtime:

```rust
fn rt() -> &'static Runtime {
    static RT: OnceLock<Runtime> = OnceLock::new();
    RT.get_or_init(|| {
        Builder::new_multi_thread()
            .enable_all()
            .build()
            .expect("Tokio runtime")
    })
}
```

And a single cached kube::Client:

```rust
static CLIENT_CACHE: OnceLock<RwLock<Option<kube::Client>>> = OnceLock::new();
```

The kube::Client uses HTTP/2 multiplexing under the hood. One TCP connection to the API server handles all concurrent log streams. 8 pods streaming simultaneously = 8 HTTP/2 streams on 1 connection. No connection pool exhaustion, no TLS handshake per stream.

The RwLock around the client cache allows many concurrent readers (each log stream cloning the client) with no contention. The write lock is only taken once — on first connection.
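The read-mostly cache pattern can be sketched without kube at all. In the example below, Client is a hypothetical stand-in for kube::Client (cheap to clone, expensive to create), and the accessor name client and its connect parameter are assumptions for illustration.

```rust
use std::sync::{OnceLock, RwLock};

/// Hypothetical stand-in for kube::Client.
#[derive(Clone, Debug, PartialEq)]
pub struct Client {
    pub id: u32,
}

static CLIENT_CACHE: OnceLock<RwLock<Option<Client>>> = OnceLock::new();

/// Return the cached client, creating it with `connect` on first use.
pub fn client(connect: impl FnOnce() -> Client) -> Client {
    let cache = CLIENT_CACHE.get_or_init(|| RwLock::new(None));
    {
        // Fast path: shared read lock, clone the cached client.
        let guard = cache.read().unwrap_or_else(|e| e.into_inner());
        if let Some(c) = guard.as_ref() {
            return c.clone();
        }
    } // read guard dropped here, before the write lock is requested

    // Slow path: take the write lock and re-check, since another thread
    // may have filled the slot in the meantime.
    let mut slot = cache.write().unwrap_or_else(|e| e.into_inner());
    slot.get_or_insert_with(connect).clone()
}
```

The re-check under the write lock is what keeps two racing first callers from both connecting; get_or_insert_with only runs connect if the slot is still empty.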

What I’d Do Differently

Configurable buffer size. 100K lines is a good default, but power users debugging long-running issues want more. This should be a setting.

Regex search. Currently it’s substring-only. Regex would be useful for patterns like status=[45]\d{2}. The concern is that regex on 100K lines might exceed the 15ms budget — but regex crate with SIMD should handle it. Worth benchmarking.

Per-pod ring buffers → merged view. Currently, multi-pod aggregation happens at the Swift layer. Moving it into Rust with a merge-sorted view across per-pod buffers would enable cross-pod search and consistent chronological ordering. This is the next major improvement.
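One possible shape for that merge-sorted view is a k-way merge over the per-pod buffers using a min-heap. This is only a sketch of the general technique, not a planned Krust design: merge_pods is a hypothetical function, timestamps are assumed to be u64 values, and each pod's buffer is assumed to be already sorted by timestamp.

```rust
use std::cmp::Reverse;
use std::collections::BinaryHeap;

/// Merge per-pod buffers into one chronological sequence.
/// Each entry is (timestamp, line); each buffer must be sorted by timestamp.
pub fn merge_pods<'a>(pods: &[Vec<(u64, &'a str)>]) -> Vec<(u64, &'a str)> {
    // Min-heap of (timestamp, pod index, position within that pod's buffer).
    let mut heap = BinaryHeap::new();
    for (pod, buf) in pods.iter().enumerate() {
        if let Some(&(ts, _)) = buf.first() {
            heap.push(Reverse((ts, pod, 0usize)));
        }
    }

    let mut out = Vec::with_capacity(pods.iter().map(Vec::len).sum());
    while let Some(Reverse((_, pod, idx))) = heap.pop() {
        out.push(pods[pod][idx]);
        // Queue the next line from the same pod, if there is one.
        if let Some(&(ts, _)) = pods[pod].get(idx + 1) {
            heap.push(Reverse((ts, pod, idx + 1)));
        }
    }
    out
}
```

The heap never holds more than one entry per pod, so merging n lines from k pods costs O(n log k), and ties on timestamp fall back to pod index for a stable order.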

Numbers

Measured on an M2 MacBook Pro, production EKS cluster:

| Operation | Time |
|---|---|
| Single-pod stream startup | ~300ms |
| 8-pod concurrent stream startup | ~800ms |
| Substring search, 100K lines | 5-8ms |
| Structured log parse (JSON) | ~500ns/line |
| Structured log parse (logfmt) | ~200ns/line |
| Buffer insertion (with eviction) | ~50ns |
| Memory (100K lines stored) | ~25MB |

The entire log viewer — streaming, parsing, storing, searching — runs on Tokio worker threads. The Swift main thread does nothing but append pre-formatted attributed strings to an NSTextView. Result: zero UI stuttering, even at hundreds of lines per second across 8 concurrent streams. See performance benchmarks for more details.

Try It

Krust is a native Kubernetes desktop app for macOS. The log viewer described here is one component of a larger system that manages 27 Kubernetes resource types.

```
brew install vanchonlee/tap/krust
```

The Rust core is closed source, but I’m happy to discuss the architecture. If you’re building something similar, such as real-time data streaming with Rust and a native UI, the patterns here (watch-based cancellation, asymmetric caching, brute-force search with prefiltering, poisoning recovery) are broadly applicable.

Built with Rust (kube-rs, tokio, serde_json, UniFFI) and Swift (AppKit, SwiftUI). Feedback welcome — especially if you’ve solved similar problems differently.